Multiple ChatGPT instances combine to figure out chemistry

news.movim.eu / ArsTechnica · Wednesday, 20 December, 2023 - 19:14 · 1 minute

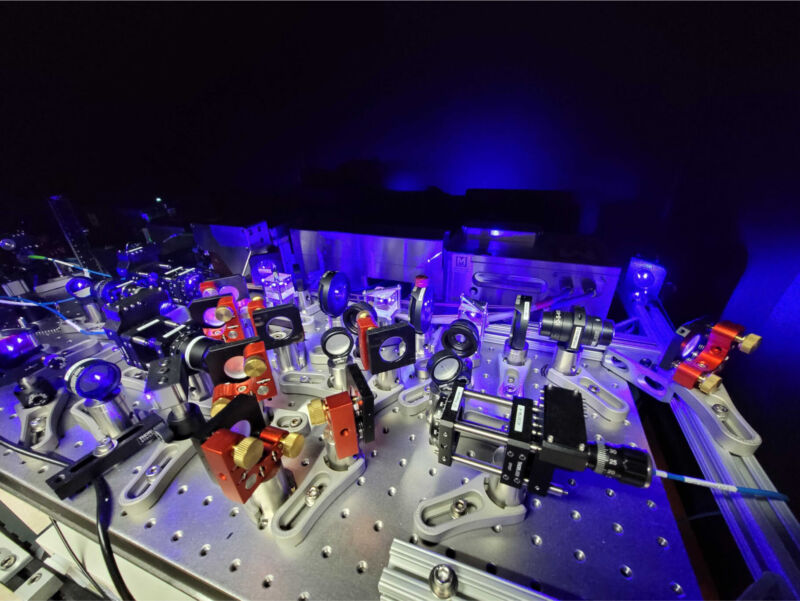

The lab's empty because everyone's relaxing in the park while the AI does their work. (credit: Fei Yang)

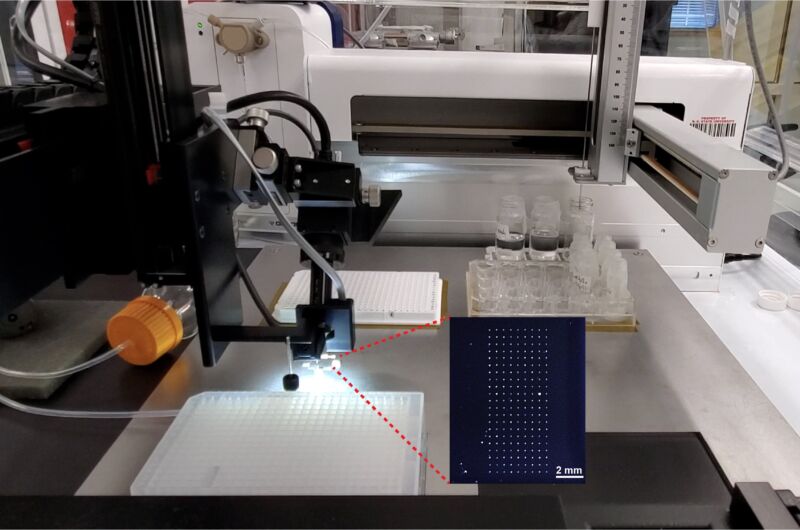

Despite rapid advances in artificial intelligence, AIs are nowhere close to being ready to replace humans in doing science. But that doesn't mean they can't help automate away some of the drudgery of day-to-day scientific experimentation. For example, a few years back, researchers put an AI in control of automated lab equipment and taught it to exhaustively catalog all the reactions that can occur among a set of starting materials.

While useful, that still required a lot of researcher intervention to train the system in the first place. A group at Carnegie Mellon University has now figured out how to get an AI system to teach itself to do chemistry. The system requires a set of three AI instances, each specialized for different operations. But, once set up and supplied with raw materials, you just have to tell it what type of reaction you want done, and it'll figure it out.

An AI trinity

The researchers indicate that they were interested in understanding what capacities large language models (LLMs) can bring to the scientific endeavor. So all of the AI systems used in this work are LLMs, mostly GPT-3.5 and GPT-4, although two others, Claude 1.3 and Falcon-40B-Instruct, were tested as well. (GPT-4 and Claude 1.3 performed the best.) But, rather than using a single system to handle all aspects of the chemistry, the researchers set up distinct instances to cooperate in a division-of-labor setup and called it "Coscientist."