What does it take to get AI to work like a scientist?

news.movim.eu / ArsTechnica · Tuesday, 8 August, 2023 - 18:27

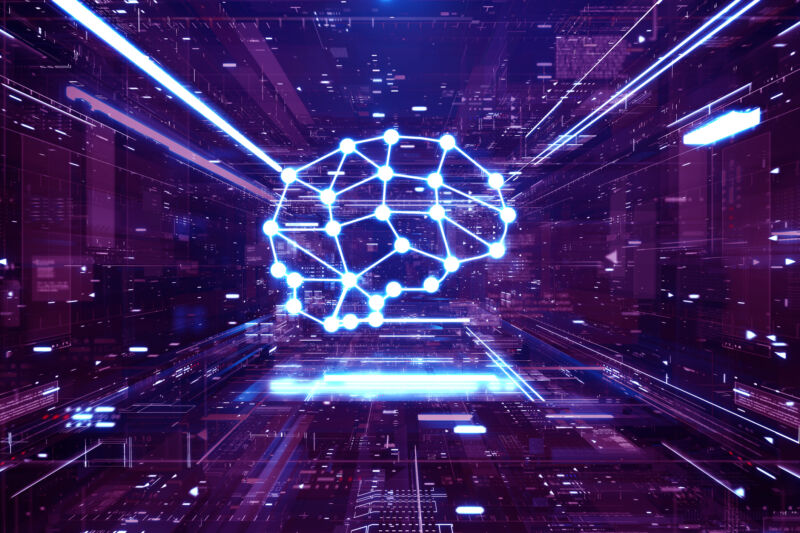

Credit: Andriy Onufriyenko

As machine-learning algorithms grow more sophisticated, artificial intelligence seems poised to revolutionize the practice of science itself. In part, this will come from the software enabling scientists to work more effectively. But some advocates are hoping for a fundamental transformation in the process of science. The Nobel Turing Challenge, issued in 2021 by noted computer scientist Hiroaki Kitano, tasked the scientific community with producing a computer program capable of making a discovery worthy of a Nobel Prize by 2050.

Part of the work of scientists is to uncover laws of nature—basic principles that distill the fundamental workings of our Universe. Many of them, like Newton’s laws of motion or the law of conservation of mass in chemical reactions, are expressed in a rigorous mathematical form. Others, like the law of natural selection or Mendel’s law of genetic inheritance, are more conceptual.

The scientific community consists of theorists, data analysts, and experimentalists who collaborate to uncover these laws. The dream behind the Nobel Turing Challenge is to offload the tasks of all three onto artificial intelligence.